IBM Invents a Machine-Learning Device to Help You Cope With Long Meetings



Long meetings are the worst.

I confess: I like to read patents. It’s a great way to see what’s around the corner, to get an early gander at those big, crazy ideas that often morph into big, not-so-crazy ideas. And in the area of digital health, that seems to be especially true.

To be sure, some of those ideas look radically new and aren’t. Consider the buzz of late about Apple’s rumored potential-ish introduction-maybe of a wrist-worn blood-pressure-monitoring device, which was covered pretty much everywhere, with many outlets pointing to a recently published patent application as an aha moment. In truth, Apple engineers have been dreamscaping this one for a while (see their 2014 application for “Blood pressure monitoring using a multi-function wrist-worn device”). And the patent office is filled to the proverbial gills with filings for similarly conceived wrist-wearable sphygmomanometers (like I wasn’t going to use that term), some of which date back several decades. Note, for example, this 1993 patent grant for a “wrist mount apparatus for use in blood pressure tonometry” (U.S. Patent No. 5,271,405).

But then, some ideas are genuinely new. And well, fanciful. And well, maybe even not so crazy after all. Take this IBM patent application published last month, entitled: “Machine learned optimizing of health activity for participants during meeting times.”


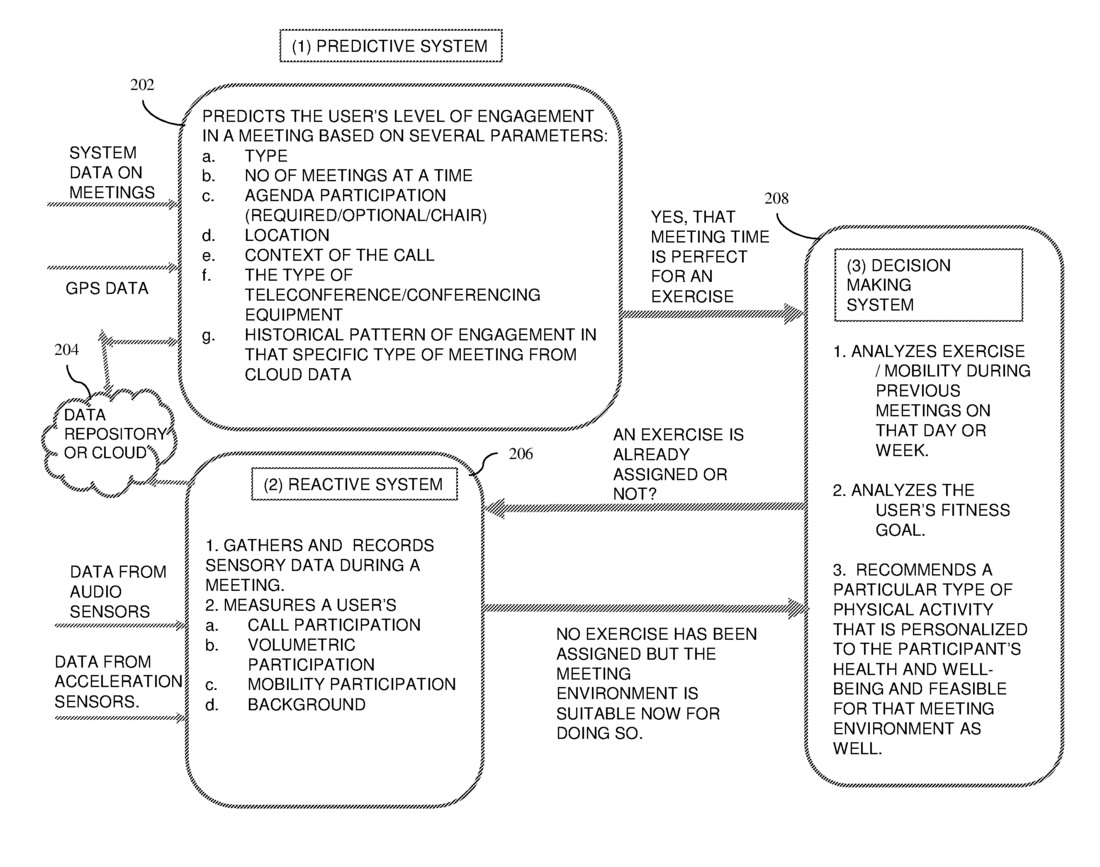

(Fortune China) Chinese translation by Charlie; reviewed by Xia Lin.

The idea, write IBM Technical Leader Paul R. Bastide and colleagues, is to prompt participants in a lengthy conference call to exercise, presumably with the mute button on. “Based on the predicted engagement level, the sensor data and the participant’s fitness goal,” write the IBM team in their initial November 2016 filing, “an exercise for the participant to perform during the conference call may be determined. A notification signal may be transmitted to the participant to perform the exercise.”

There are, indeed, quite a few “may be’s” in this proffered invention: “An engagement level of the participant that is to participate in the conference call may be predicted based on the received data and the location data. Sensor data associated with the participant may be received… A user’s wearable fitness device may be interfaced with the smartphone, and together they detect in real-time the user’s participation in the conference call. This may be achieved explicitly by detecting whether the user is moving or not during the meeting, and/or implicitly via the app in the smartphone that analyzes the phone’s usage pattern (e.g., how often the user is engaged in the meeting using the phone’s audio sensors).”

Such hypotheticals aside, though, one has to admire the instinct of these imaginative IBM’ers to see a critical unmet need and try to fill it. The mere thought of my next endless meeting makes my back ache. My knees creak. My eyelids droop. If it takes another eavesdropping smartphone app to come up with a few standing reps or seat-borne shake-outs to subdue the scourge, so be it.
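For the curious, the flow the filing describes (predict engagement, pick an exercise, send a nudge) can be roughed out in a few lines of Python. This is only a toy sketch under loud assumptions, not IBM's method: the `Participant` fields, the 0.5 threshold, the halving rule, and the exercise names are all hypothetical, and a hand-written heuristic stands in for whatever machine-learned predictor the application has in mind.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Participant:
    name: str
    fitness_goal: str      # hypothetical labels: "strength" or "mobility"
    speaking_ratio: float  # assumed share of past call time spent talking, 0.0-1.0
    at_desk: bool          # stand-in for the filing's "location data"
    is_moving: bool        # stand-in for the wearable's sensor data


def predict_engagement(p: Participant) -> float:
    """Toy heuristic standing in for the machine-learned engagement predictor."""
    score = p.speaking_ratio
    if not p.at_desk:
        score *= 0.5  # assumption: away from the desk means less active participation
    return score


def choose_exercise(p: Participant, engagement: float) -> Optional[str]:
    """Pick an exercise from engagement, sensor data and fitness goal, or None."""
    if engagement >= 0.5:  # hypothetical threshold: likely to be talking, so no nudge
        return None
    if p.is_moving:        # already up and about
        return None
    if p.fitness_goal == "strength":
        return "a few standing reps"
    return "a seated shake-out"


def notify(p: Participant, exercise: str) -> str:
    """Build the notification text; a real system would push it to a phone or wearable."""
    return f"{p.name}: time for {exercise} (stay on mute)"


listener = Participant("Ana", "strength", 0.1, at_desk=True, is_moving=False)
exercise = choose_exercise(listener, predict_engagement(listener))
if exercise is not None:
    print(notify(listener, exercise))
```

A quiet, stationary listener gets a prompt, while a participant who does most of the talking is left alone, which seems to be the spirit of the filing's "predicted engagement level."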